background

In the project described in Ernst, M.O., Banks, M.S., Bülthoff, H.H. (2000) Touch can change visual slant perception. Nature Neuroscience, 3, 1, 69-73, we asked whether touch feedback can alter the weight the visual system gives to different depth cues. We created virtual visual surfaces whose 3D orientation was specified by two cues: binocular disparity and the texture gradient (a monocular depth cue). The two cues specified slightly different orientations. We first measured how much weight each subject gave to disparity as opposed to texture. We then exposed them to a 45-minute session in which the touch feedback was consistent with one cue and not the other. Finally, we tested them again to see whether they had learned to give more weight to the visual cue that had been reinforced through touch. The results showed that they had done just that.

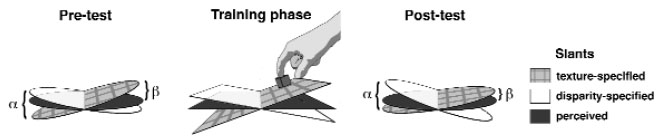

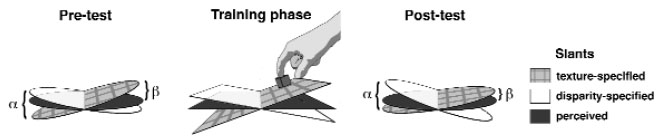

The pre- and post-tests were purely visual tasks. The visual plane had different texture- and disparity-specified slants. The texture-specified slant is represented by the gray grids and the disparity-specified slant by the light gray planes. The angle between the two specified slants is the conflict angle α. The perceived slant is represented by dark gray planes. β is the texture-specified slant at which the plane was perceived as frontoparallel, even though its texture- and disparity-specified slants generally differed. The decrease in β between pre- and post-test indicates an increase in texture weight. The haptic training phase occurred between the pre- and post-tests and consisted of visual and haptic stimulation. The cube, surface and targets could all be seen and felt.
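The link between β and the texture weight can be made concrete with a small sketch. It assumes a linear cue-combination model that the text above does not spell out: perceived slant is a weighted average of the two specified slants, the weights sum to one, and the disparity-specified slant equals β minus α when the plane looks frontoparallel. The function name and the numbers are hypothetical and are not data from the study.

```python
def texture_weight(beta_deg: float, alpha_deg: float) -> float:
    """Texture weight implied by a frontoparallel setting.

    Assumed model (illustrative, not taken from the text):
        perceived = w_t * S_texture + (1 - w_t) * S_disparity
    with S_texture = beta and S_disparity = beta - alpha.
    Setting perceived = 0 and solving gives w_t = 1 - beta / alpha.
    """
    if alpha_deg == 0:
        raise ValueError("the conflict angle must be nonzero")
    return 1.0 - beta_deg / alpha_deg


# Hypothetical settings for one observer, in degrees (not measured values).
pre_weight = texture_weight(beta_deg=6.0, alpha_deg=10.0)
post_weight = texture_weight(beta_deg=4.0, alpha_deg=10.0)
print(f"texture weight: pre {pre_weight:.2f}, post {post_weight:.2f}")
```

Under these assumptions a smaller β at a fixed α always yields a larger texture weight, which is the direction of change described in the caption.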

the apparatus

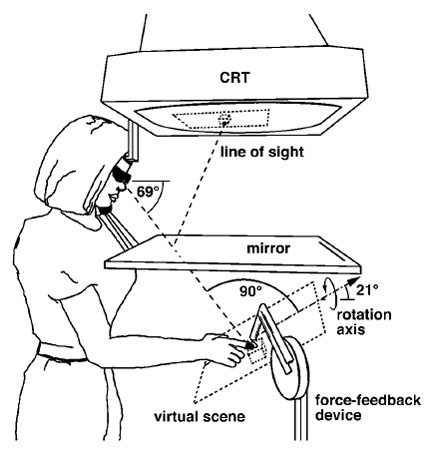

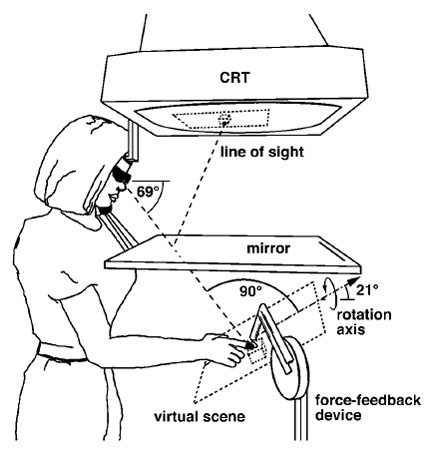

The visual stimulus was generated on a cathode ray tube (CRT) and viewed in a mirror that obscured the subject’s hand. A dot on the screen indicated finger position. The haptic stimulus was created by a force-feedback device. The three-dimensional position of the fingertip was monitored, and an appropriate force was applied to the tip when it reached the position of the simulated haptic objects, creating a compelling sensation of touching a solid surface and cube. The haptic stimulus included realistic simulation of gravity’s effect on the cube’s motion across the plane. The haptic stimulus also simulated friction: it was low between the cube and plane and higher between the fingertip and cube. The subject’s line of sight was perpendicular to the rotation axis. A chin and forehead rest limited head movements.
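The idea of applying a force once the fingertip reaches a simulated surface can be sketched with a simple spring (penalty) model, a common approach in haptic rendering. This is only an illustration: the text does not say which rendering method the device used, and the function name, stiffness value and units below are hypothetical. Gravity and friction are left out.

```python
import math


def contact_force(finger, plane_point, plane_normal, stiffness=500.0):
    """Spring-like force pushing the fingertip back out of a flat surface.

    finger, plane_point, plane_normal: 3-element sequences (metres).
    stiffness: hypothetical spring constant in N/m.
    Returns a 3-element list of force components in newtons.
    """
    length = math.sqrt(sum(c * c for c in plane_normal))
    if length == 0:
        raise ValueError("plane_normal must be nonzero")
    normal = [c / length for c in plane_normal]

    # Signed height of the fingertip above the surface along the normal.
    height = sum((f - p) * n for f, p, n in zip(finger, plane_point, normal))

    if height >= 0.0:
        return [0.0, 0.0, 0.0]  # no contact, so no force

    # Force grows with penetration depth and points out of the surface.
    return [-stiffness * height * n for n in normal]


# Fingertip 2 mm below a horizontal surface at z = 0.
print(contact_force((0.0, 0.0, -0.002), (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)))
```

In a real device this calculation would run inside a fast control loop that reads the fingertip position and commands the motors on every cycle.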

the results

We found that haptic feedback can change subsequent visual percepts by changing the weights given to different sources of visual information. In both the pre- and post-tests, observers set the perceived angle somewhere between the texture-specified slant and the disparity-specified slant, indicating that both signals affected performance. However, after haptic training, settings were consistently different from pre-test settings. When haptic feedback was consistent with the texture-specified slant, the average weight assigned to the disparity-based estimator decreased. This means that a stimulus that appeared frontoparallel in the pre-test appeared slanted in the direction specified by texture in the post-test. The opposite effect occurred when haptic feedback was consistent with the disparity-specified slant. This shows that the visual system uses sensorimotor feedback to adjust the weights assigned to different slant estimators.
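The following sketch shows why a lower disparity weight makes a previously frontoparallel stimulus look slanted toward texture. It assumes perceived slant is a weighted average of the two estimators with weights summing to one, which is an illustrative model rather than a statement of the analysis used in the study. All names and numbers are hypothetical.

```python
def perceived_slant(texture_slant, disparity_slant, disparity_weight):
    """Weighted average of two slant estimates, in degrees.

    Assumes the texture weight is 1 - disparity_weight.
    """
    if not 0.0 <= disparity_weight <= 1.0:
        raise ValueError("disparity_weight must lie between 0 and 1")
    texture_weight = 1.0 - disparity_weight
    return disparity_weight * disparity_slant + texture_weight * texture_slant


# One hypothetical stimulus: texture says +6 degrees, disparity says -4 degrees.
texture, disparity = 6.0, -4.0

before = perceived_slant(texture, disparity, disparity_weight=0.6)
after = perceived_slant(texture, disparity, disparity_weight=0.4)
print(f"perceived slant before training: {before:.1f} deg")
print(f"perceived slant after training:  {after:.1f} deg")
```

With these made-up values the stimulus is perceived as roughly frontoparallel before training and as slanted in the texture-specified direction once the disparity weight drops.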